Content Optimization: how to write for both humans and robots

Business context

Eco-Counter – a leader in automated pedestrian and bike counting solutions – has been participating in our private Beta-test program since June 2020. On August 13, 2020, Matt – a seasoned Market Strategist at Eco-Counter – published a blog article about the pedestrianization of downtown Kelowna under the following title: “The summer of giving space to pedestrians – a data-driven approach in Kelowna, Canada”. Matt then asked us to run an Optimization report on this article to find out its Score and get recommendations on how to improve it.

Let’s see where this led us!

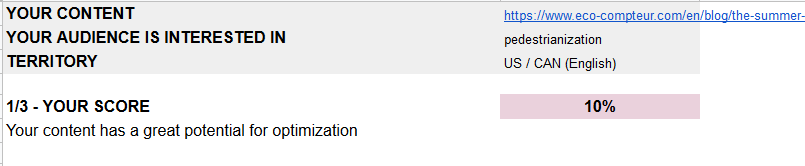

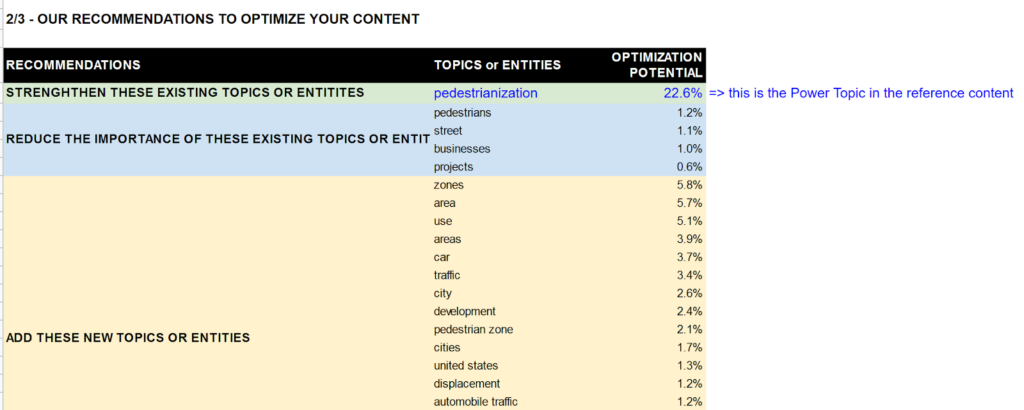

Version 1: 10% - A great potential for optimization

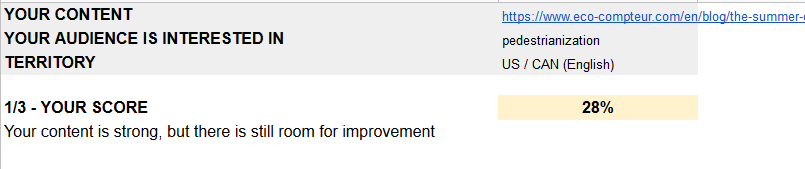

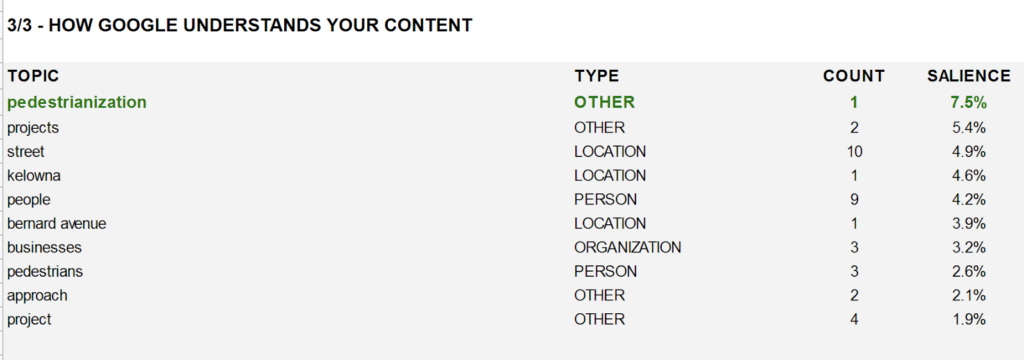

Matt’s original article was very well written and definitely great content for any reader with an interest in the niche subject of “Pedestrianization”. However, its Score turned out to be rather low, mainly because robots were not able to identify “Pedestrianization” as the article’s main topic.

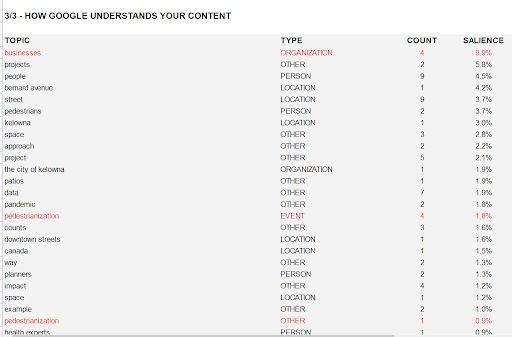

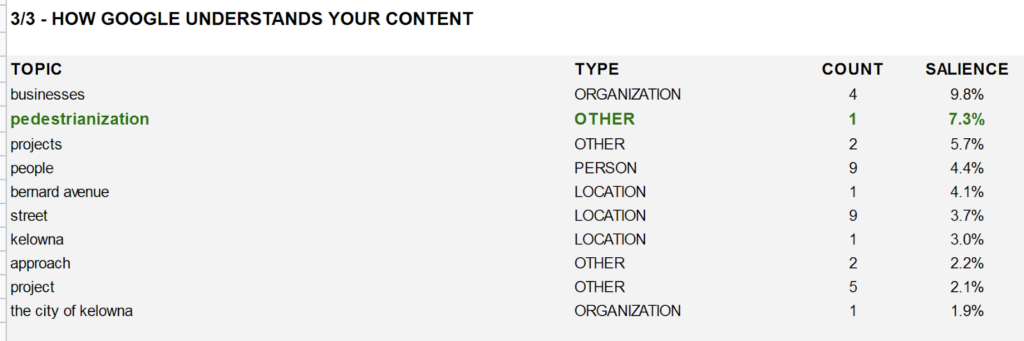

Our reports include an NLP analysis through the paid Google API, and it was striking to see that the “Pedestrianization” topic ranked only 15th and 24th under two different “Entity types”, with a rather low Salience in both cases.

Therefore, it was not surprising that our Recommendations engine put the emphasis on strengthening the Topic (or Entity) “Pedestrianization” to improve the content score.
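To make the idea of an Entity's rank more concrete, here is a minimal Python sketch. It assumes the entity analysis has already been exported as a list of name / type / salience records. The function name `find_entity_ranks` and the sample values are hypothetical and purely illustrative: they are not the figures from Matt's report, and this is not how our tool is implemented.

```python
def find_entity_ranks(entities, target):
    """Return (rank, entity_type, salience) for every entry named `target`.

    Entities are ranked by descending salience, so rank 1 is the entity
    the analysis considers most central to the text.
    """
    ordered = sorted(entities, key=lambda e: e["salience"], reverse=True)
    return [
        (rank, e["type"], e["salience"])
        for rank, e in enumerate(ordered, start=1)
        if e["name"].lower() == target.lower()
    ]


# Made-up sample: the same name can appear under two different entity types.
sample_entities = [
    {"name": "businesses", "type": "ORGANIZATION", "salience": 0.30},
    {"name": "Kelowna", "type": "LOCATION", "salience": 0.20},
    {"name": "summer", "type": "EVENT", "salience": 0.10},
    {"name": "space", "type": "OTHER", "salience": 0.05},
    {"name": "pedestrianization", "type": "OTHER", "salience": 0.02},
    {"name": "pedestrianization", "type": "EVENT", "salience": 0.01},
]

for rank, entity_type, salience in find_entity_ranks(sample_entities, "Pedestrianization"):
    print(f"#{rank} as {entity_type} (salience {salience})")
```

In this toy sample the target topic only shows up near the bottom of the list, which is the kind of situation the first report revealed.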

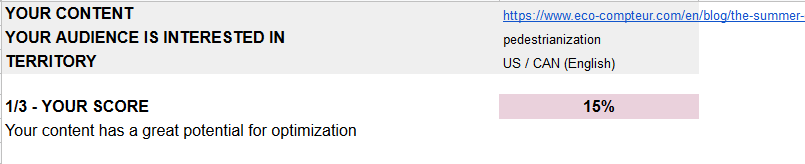

Version 2: 15% - The “title” effect (1/2)

Our #1 objective was to boost the Salience of “Pedestrianization” and make it the article’s Main Entity.

First of all, we changed the article title (see above) and… the Score went up from 10% to 15%, because this change alone was enough to rank the “Pedestrianization” topic #2 in the NLP analysis by the Google API!

Version 3: 28% - Much better but not there yet

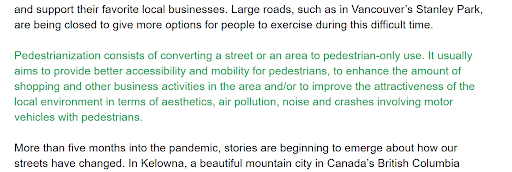

To keep boosting the “Pedestrianization” Entity, we followed great advice from SEO expert Ruth Burr Reedy, given in a video published on the Moz blog under the title “Better Content Through NLP”: we added a definition of Pedestrianization at the top of the text (actually right after the intro), which made sense for both humans and robots. We also made sure to include some of the recommended new Topics in this definition.

The outcome? A Score of 28% mainly thanks to the new matching Entities between our article and our reference content.

However, Pedestrianization was still not ranked #1, so we were not yet fully satisfied.
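The notion of “matching Entities” between an article and reference content can be illustrated with a simple set comparison. The sketch below is only an assumption about what such a comparison might look like: the function `matching_entities`, the coverage ratio, and the sample lists are hypothetical. This article does not describe the actual Score formula, and the sketch does not try to reproduce it.

```python
def matching_entities(article_entities, reference_entities):
    """Compare two lists of entity names, ignoring case and stray spaces.

    Returns (matched, missing, coverage) where coverage is the share of
    reference entities that also appear in the article.
    """
    article = {name.strip().lower() for name in article_entities}
    reference = {name.strip().lower() for name in reference_entities}
    matched = article & reference
    missing = reference - article
    coverage = len(matched) / len(reference) if reference else 0.0
    return sorted(matched), sorted(missing), coverage


# Illustrative lists only, not taken from the actual reports.
article = ["Pedestrianization", "Kelowna", "businesses"]
reference = ["pedestrianization", "traffic", "city", "car"]

matched, missing, coverage = matching_entities(article, reference)
print("Matched:", matched)
print("Missing:", missing)
print(f"Coverage: {coverage:.0%}")
```

The `missing` list plays the role of the recommended new Topics: entities present in the reference content but absent from the article.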

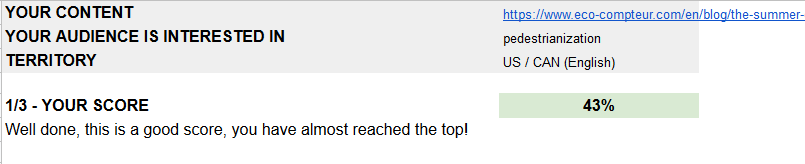

Version 4: 43% - Top Entity at last!

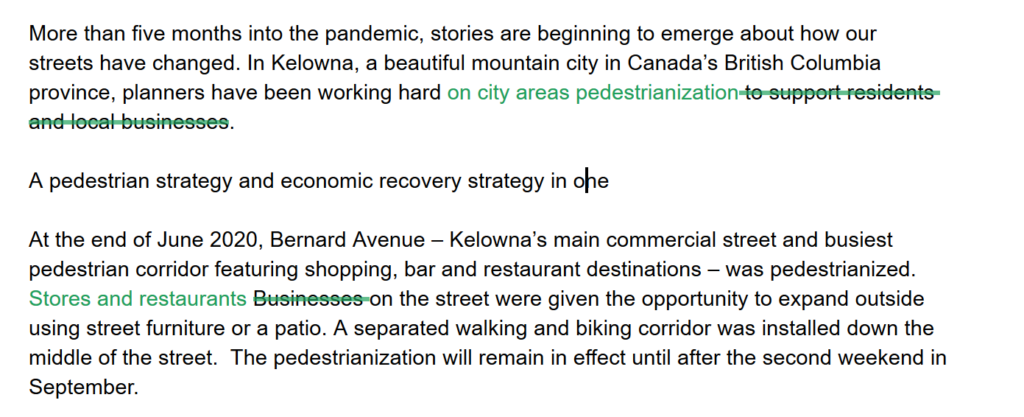

For some reason, the article’s #1 Entity according to the Google NLP API was still “Businesses”, despite only 4 minor occurrences, so we decided to do something about it:

- We replaced one occurrence by “Stores and restaurants” where it made sense.

- We deleted another occurrence which was not that important for readers.

We did it!

Thanks to these minor adjustments, the Salience of the “Businesses” topic decreased and “Pedestrianization” finally became the #1 Topic of the article according to the Google NLP API, which boosted our Score up to 43%!

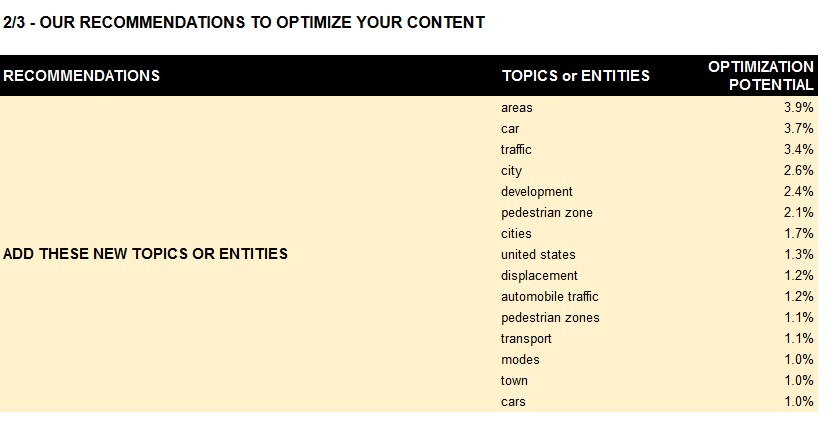

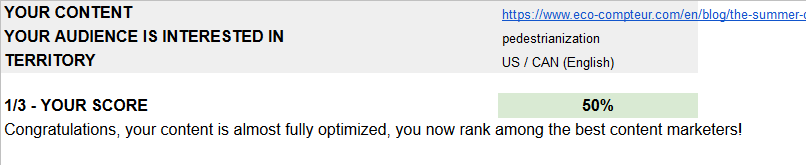

Version 5: 50% - Top of the World

We consider that once your Score is above 50%, your content optimization work is done and you can focus on other things. To reach that 50% mark, we simply followed the other recommendations from our tool, in order of optimization potential. According to the last report, the next best thing to do was to add a few new Topics such as “areas”, “car”, “traffic”, “city”, etc. – see screenshot below for more details.

Matt’s original article was truly great, and adding a few occurrences of the Topics suggested above was enough to help robots consider them important in this article. Here are some of the edits we made, mainly in the first paragraphs:

We ran another Optimization report and… victory, we finally reached the 50% Score, yeah!

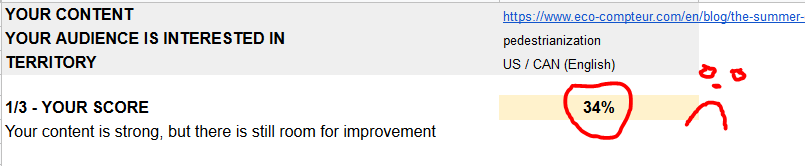

Version 6: 34% - The “title” effect (2/2)

Just for fun, we made one last test with a sixth version of the article: it was exactly the same as the fifth version except we used the original title, i.e.:

The summer of giving space to pedestrians – a data-driven approach in Kelowna, Canada

instead of

Pedestrianization – a data-driven approach in Kelowna, Canada

There is little need to comment on the version 6 Score: with the original title, it fell from 50% back to 34%.

Conclusion

The good news is that it’s not that difficult to write for both humans and robots. It looks like a few slight changes can have a great impact on how Google and the other search engines understand your content.

Our advice is simple: write for humans first. Create great content that is truly genuine and useful for your audience. Forget about writing basic, first-grader sentences to accommodate robots’ allegedly poor capabilities. Natural Language Processing (NLP) makes robots as smart as humans, as long as you respect a few rules such as:

- Your article title is key: it should include your main topic

- Write about your main topics in the first paragraphs

- Add a definition of your main topic if there is any risk of ambiguity for search engines

For more details, please refer to Franck’s post about “how to write effectively”.
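The three rules above can be turned into a rough pre-publication check. The following Python sketch is a simple heuristic, not our Recommendations engine: `check_writing_rules` is a hypothetical helper, the definition test only looks for naive cues such as “<topic> is”, and the sample body text is invented for the example. The two titles are the ones compared in versions 5 and 6.

```python
def check_writing_rules(title, body, topic, lead_paragraphs=2):
    """Apply three naive checks to a draft (plain substring matching only)."""
    topic_lc = topic.lower()
    # Paragraphs are assumed to be separated by a blank line.
    paragraphs = [p for p in body.split("\n\n") if p.strip()]
    lead_text = " ".join(paragraphs[:lead_paragraphs]).lower()
    # Crude cues for a definition; a real check would need more than this.
    definition_cues = (
        f"{topic_lc} is ",
        f"{topic_lc} refers to ",
        f"{topic_lc} means ",
    )
    return {
        "topic_in_title": topic_lc in title.lower(),
        "topic_in_lead": topic_lc in lead_text,
        "has_definition_cue": any(cue in body.lower() for cue in definition_cues),
    }


original_title = "The summer of giving space to pedestrians – a data-driven approach in Kelowna, Canada"
new_title = "Pedestrianization – a data-driven approach in Kelowna, Canada"

# Invented body text, used only to exercise the checks.
sample_body = (
    "Pedestrianization is the conversion of a street into a car-free area.\n\n"
    "This summer, Kelowna opened part of its downtown to people on foot."
)

print(check_writing_rules(original_title, sample_body, "Pedestrianization"))
print(check_writing_rules(new_title, sample_body, "Pedestrianization"))
```

Note that the original title fails the first check even though it mentions “pedestrians”: exact substring matching treats that as a different word, which is a limitation of this sketch rather than a statement about how search engines work.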

It’s time to take advantage of NLP, Entities and Salience. Please feel free to share your own experience in the comments below; we are eager to get your feedback on these hot new topics.